Behavior of OVERWRITE on Hive internal and external tables

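This note looks at how OVERWRITE behaves on Hive internal and external tables. OVERWRITE means "rewrite". When OVERWRITE is specified in a load statement, it has the following effects: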

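•The contents of the target table (or partition), if any, are deleted, and then the contents of the file or directory that filepath points to are added to the table or partition.

•If the target table (or partition) already contains a file whose name collides with a file name in filepath, the existing file is replaced by the new file.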
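The two effects listed above can be sketched with a toy model in Python. This is only an illustration under stated assumptions, not Hive's implementation: a partition is modeled as a dict from file name to rows, and `load_data` is a hypothetical helper name.

```python
# Toy model: a partition is a dict mapping file name -> list of rows.
def load_data(partition, incoming, overwrite=False):
    """Return the partition's files after a simulated load.

    partition: existing files {name: rows}
    incoming:  files referred to by filepath {name: rows}
    """
    # With overwrite=True the existing contents are dropped first.
    result = {} if overwrite else dict(partition)
    # The incoming files are then added; a colliding name replaces the old file.
    result.update(incoming)
    return result


existing = {"tb_in_base": ["1,121212,test1", "2,131313,test2"]}
new_file = {"t2": ["8,191919,test9overwrite"]}

appended = load_data(existing, new_file)
replaced = load_data(existing, new_file, overwrite=True)
print(sorted(appended))  # ['t2', 'tb_in_base']
print(sorted(replaced))  # ['t2']
```

The model returns a new dict instead of mutating its input, so both variants can be compared against the same starting state.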

I. Internal table tests

1. Internal table creation statements:

create table tb_in_base

(

id bigint,

devid bigint,

devname string

) partitioned by (job_time bigint) row format delimited fields terminated by ',';

create table tb_in_up

(

id bigint,

devid bigint,

devname string

) partitioned by (job_time bigint) row format delimited fields terminated by ',';

2. load overwrite tests

Test data:

[hadoop@mwtec-50 tmp]$ vi tb_in_base

1,121212,test1,13072912

2,131313,test2,13072913

3,141414,test3,13072914

4,151515,test5,13072915

5,161616,test6,13072916

6,171717,test7,13072917

Upload to HDFS:

hadoop fs -put /tmp/tb_in_base /user/hadoop/output/

Load the data into table tb_in_base:

load data inpath '/user/hadoop/output/tb_in_base' into table tb_in_base partition(job_time=030729);

Add one more record without using overwrite:

[hadoop@mwtec-50 tmp]$ vi t1

7,181818,test8,13072918

Upload to HDFS:

hadoop fs -put /tmp/t1 /user/hadoop/output/t1

Load into table tb_in_base:

A new record with id 7 now appears in the table.

Test data:

[hadoop@mwtec-50 tmp]$ vi t2

8,191919,test9overwrite,13072918

Upload to HDFS: hadoop fs -put /tmp/t2 /user/hadoop/output/t2

Load with overwrite:

1. Load into a different partition:

load data inpath '/user/hadoop/output/t2' overwrite into table tb_in_base partition(job_time=030730);

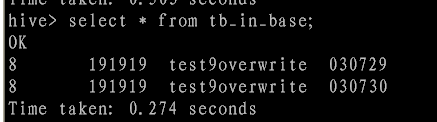

Result after loading:

2. Load into the same partition:

load data inpath '/user/hadoop/output/t2' overwrite into table tb_in_base partition(job_time=030729);

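Result after loading:

Note: load data local inpath and load data inpath behave differently. With LOCAL, the path refers to the local file system and the file is copied into the table; without LOCAL, the path refers to HDFS and the file is moved into the table's directory.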
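The difference between the two forms (copy versus move) can be imitated on a local file system. The sketch below is only an analogy under assumptions: temporary directories stand in for HDFS and the warehouse, and `load_file` and the directory names are hypothetical. It shows why a file staged in HDFS is no longer at its original path after load data inpath and has to be uploaded again before the next test.

```python
import shutil
import tempfile
from pathlib import Path


def load_file(src: Path, table_dir: Path, local: bool) -> Path:
    """Simulate where the source file ends up after a load."""
    table_dir.mkdir(parents=True, exist_ok=True)
    dest = table_dir / src.name
    if local:
        # LOCAL form: the file is copied, the source stays in place.
        shutil.copy(src, dest)
    else:
        # Non-LOCAL form: the file is moved, the source path disappears.
        shutil.move(str(src), str(dest))
    return dest


with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    staged = root / "output" / "t2"
    staged.parent.mkdir()
    staged.write_text("8,191919,test9overwrite,13072918\n")

    load_file(staged, root / "table_a", local=True)
    still_there_after_copy = staged.exists()

    load_file(staged, root / "table_b", local=False)
    still_there_after_move = staged.exists()

print(still_there_after_copy)  # True
print(still_there_after_move)  # False
```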

3. Testing the difference between insert into and insert overwrite

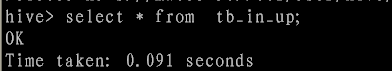

A query shows that tb_in_up is currently empty.

Insert data from the internal table tb_in_base into the internal table tb_in_up using insert into.

insert into table tb_in_up partition (job_time=030729) select id,devid,devname from tb_in_base limit 1 ;

Note: Hive's default database is default, which is why the table appears as default.tb_in_up.

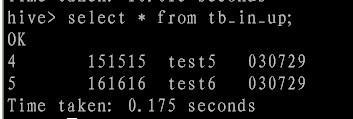

Query result after execution:

Run the insert into table statement again:

insert into table tb_in_up partition (job_time=030729) select id,devid,devname from tb_in_base where id <3 ;

After execution, two more records, with id 1 and id 2, have been added:

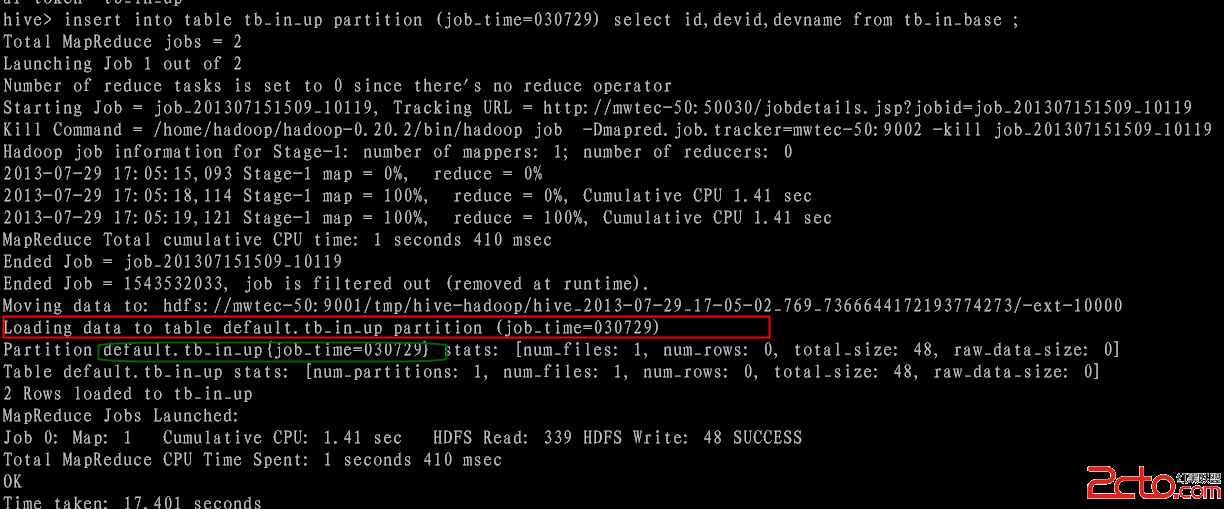

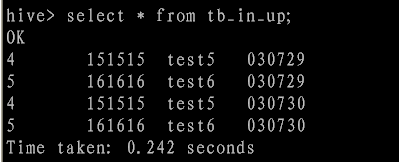

Test with overwrite table:

Statement:

insert overwrite table tb_in_up partition (job_time=030729) select id,devid,devname from tb_in_base where id >3 and id<6 ;

Execution process:

Result:

Insert into another partition:

Statement:

insert overwrite table tb_in_up partition (job_time=030730) select id,devid,devname from tb_in_base where id >3 and id<6 ;

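Execution process:

Result:

Analysis: insert into table only appends data. insert overwrite table first deletes all data in the storage directory of the specified target (that is, only the data of the specified partition is deleted; other partitions are untouched) and then writes the new data.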
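The analysis above can be replayed with a small Python model. It is a sketch under assumptions, not how Hive stores data: a table is a dict from partition value to rows, the helper names are hypothetical, and the first statement's limit 1 is assumed to have returned the row with id 1.

```python
# Rows of tb_in_base as (id, devid, devname), copied from the test data.
BASE = [
    (1, 121212, "test1"),
    (2, 131313, "test2"),
    (3, 141414, "test3"),
    (4, 151515, "test5"),
    (5, 161616, "test6"),
    (6, 171717, "test7"),
]


def insert_into(table, partition, rows):
    # Append to whatever the partition already holds.
    table.setdefault(partition, []).extend(rows)


def insert_overwrite(table, partition, rows):
    # Replace only the named partition; other partitions are not touched.
    table[partition] = list(rows)


def ids(table, partition):
    return [row[0] for row in table.get(partition, [])]


tb_in_up = {}
insert_into(tb_in_up, "030729", BASE[:1])                       # limit 1 (assumed id 1)
insert_into(tb_in_up, "030729", [r for r in BASE if r[0] < 3])  # id < 3
after_inserts = ids(tb_in_up, "030729")

insert_overwrite(tb_in_up, "030729", [r for r in BASE if 3 < r[0] < 6])
insert_overwrite(tb_in_up, "030730", [r for r in BASE if 3 < r[0] < 6])

print(after_inserts)             # [1, 1, 2]
print(ids(tb_in_up, "030729"))   # [4, 5]
print(ids(tb_in_up, "030730"))   # [4, 5]
```

Overwriting partition 030730 leaves partition 030729 as it was, which matches the partition-level behavior described in the analysis.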

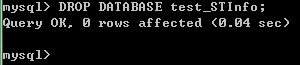

II. MySQL external table tests

1. Table creation statements:

-- Hive table creation statements:

CREATE EXTERNAL TABLE tb_in_mysql(

developerid int,

productid int,

dnewuser int

) row format delimited fields terminated by ',';

CREATE EXTERNAL TABLE tb_mysql(

developerid int,

productid int,

dnewuser int

)

STORED BY 'com.db.hive.mysql.MySQLStorageHandler' WITH SERDEPROPERTIES ("mapred.jdbc.column.mapping" = "developerid,productid,dnewuser" )

TBLPROPERTIES (

"mapred.jdbc.input.table.name" = "tb_mysql",

"mapred.jdbc.onupdate.columns.ignore" = "developerid,productid",

"mapred.jdbc.onupdate.columns.sum" = "dnewuser",

"mapred.jdbc.url" = "jdbc:mysql://192.168.241.94:3307/db",

"mapred.jdbc.username" = "db",

"mapred.jdbc.password" = "db"

);

-- MySQL table creation statement:

CREATE TABLE tb_mysql (

`developerid` int(11) NOT NULL DEFAULT '0',

`productid` int(11) NOT NULL DEFAULT '0',

`dnewuser` int(11) NOT NULL DEFAULT '0'

) ENGINE=MyISAM DEFAULT CHARSET=utf8;

Test data:

1,1212,10

2,1313,9

3,1414,13

4,1515,50

5,1616,70

6,1717,80

7,18818,100

Upload to HDFS:

hadoop fs -put /tmp/tb_mysql /user/hadoop/output/

Load into the Hive table:

load data inpath '/user/hadoop/output/tb_mysql' into table tb_in_mysql;

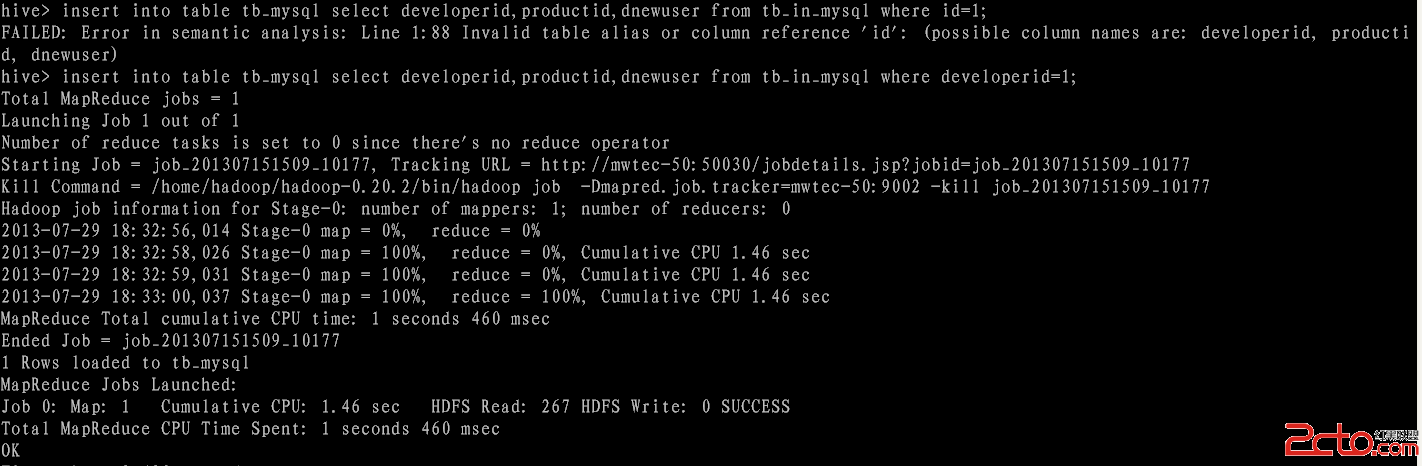

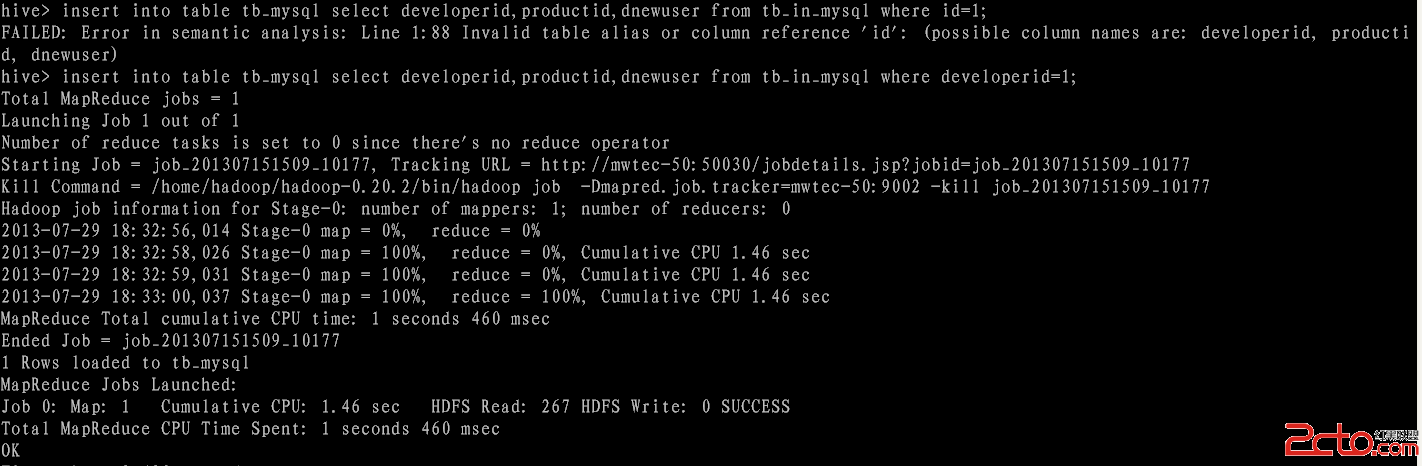

Use insert into table to write data into the external table tb_mysql:

insert into table tb_mysql select developerid,productid,dnewuser from tb_in_mysql where developerid=1;

Execution process:

Result:

mysql> select * from tb_mysql;

+-------------+-----------+----------+

| developerid | productid | dnewuser |

+-------------+-----------+----------+

| 1 | 1212 | 10 |

+-------------+-----------+----------+

1 row in set

Run the same step again with a different row:

insert into table tb_mysql select developerid,productid,dnewuser from tb_in_mysql where developerid=2;

Result:

mysql> select * from tb_mysql;

+-------------+-----------+----------+

| developerid | productid | dnewuser |

+-------------+-----------+----------+

| 1 | 1212 | 10 |

| 2 | 1313 | 9 |

+-------------+-----------+----------+

2 rows in set

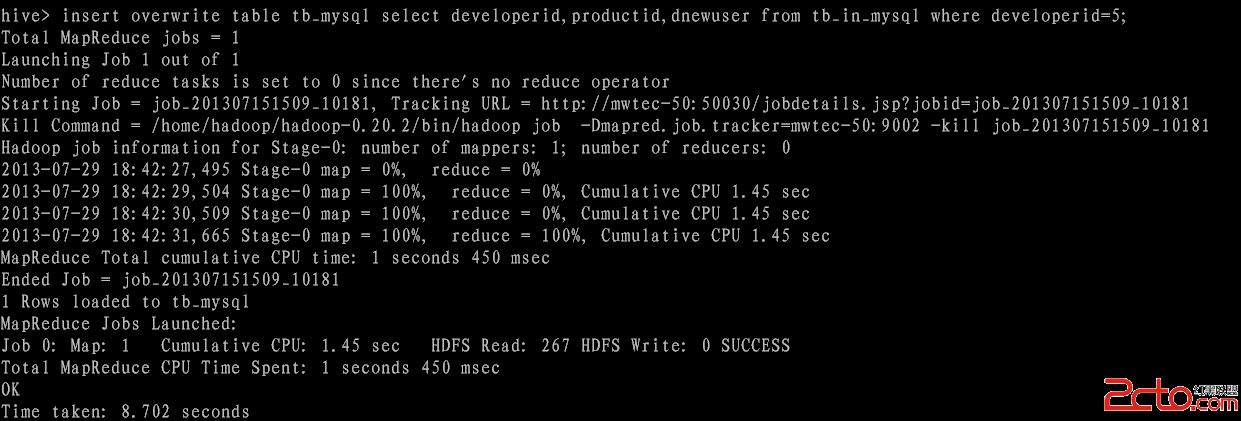

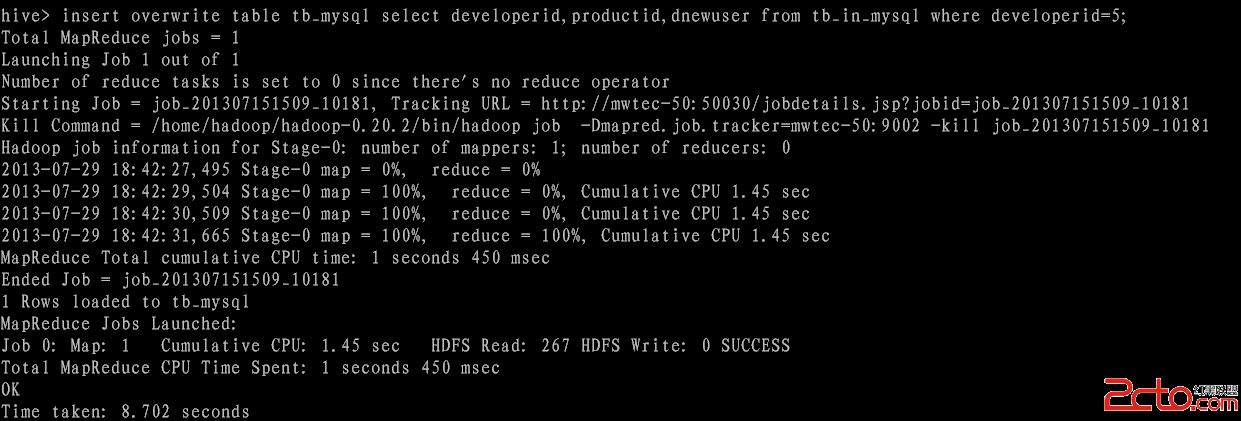

Test with overwrite:

insert into table tb_mysql select developerid,productid,dnewuser from tb_in_mysql where developerid=5;

Execution process:

Result:

mysql> select * from tb_mysql;

+-------------+-----------+----------+

| developerid | produc
